
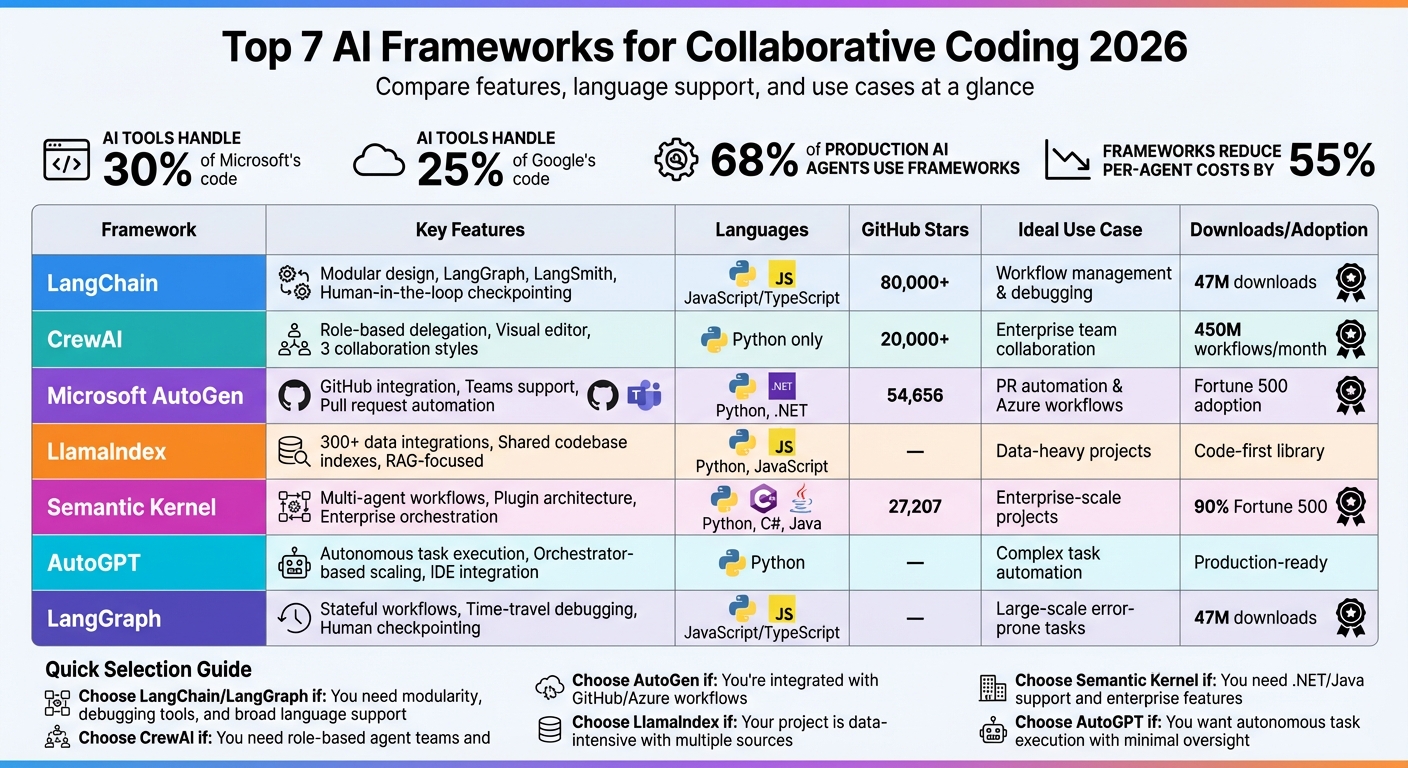

AI tools now handle 30% of Microsoft’s code and 25% of Google’s, but managing multiple AI agents in coding projects has created new challenges. From coordination bottlenecks to context fragmentation, developers need AI-powered coding guidance and frameworks that ensure smooth collaboration, maintainable code, and reduced "review debt" caused by unclear AI-generated updates. Here’s a breakdown of the top frameworks for tackling these issues in 2026:

Quick Comparison:

| Framework | Key Features | Languages Supported | Ideal Use Case |
|---|---|---|---|
| LangChain | Modular design, LangGraph, LangSmith | Python, JavaScript/TS | Workflow management, debugging |
| CrewAI | Role-based delegation, visual editor | Python | Enterprise team collaboration |
| Microsoft AutoGen | GitHub integration, Teams support | Python, .NET | Pull request automation |
| LlamaIndex | Data-driven collaboration | Python, JavaScript | Data-heavy projects |
| Semantic Kernel | Multi-agent workflows, plugins | Python, C#, Java | Enterprise orchestration |
| AutoGPT | Task automation, orchestration | Python | Complex task execution |
| LangGraph | Stateful workflows, checkpointing | Python, JavaScript/TS | Large-scale, error-prone tasks |

Key Takeaway: Frameworks like LangChain and LangGraph excel in modularity and debugging, while CrewAI and Semantic Kernel cater to enterprise needs. Choose based on your team's language preferences, project complexity, and collaboration requirements.

AI Frameworks for Collaborative Coding 2026: Feature Comparison Chart

You can also discover the best AI tools for development and automation in our curated directory.


LangChain has become a go-to framework for developers, boasting 47 million downloads on PyPI as of January 2026. Its modular design allows teams to focus on specific components like vector stores, LLM prompts, or retrieval logic independently, making it a favorite in the AI development space.

LangChain offers several tools that make collaboration seamless. For example, its LangGraph extension manages workflows by maintaining context, even through loops and retries. It also includes human-in-the-loop checkpoints, ensuring safe updates when multiple agents are working on production code. Another standout feature is LangSmith, which centralizes tracing and monitoring, simplifying collaborative debugging. Teams can even use Mermaid diagrams for visual debugging, which helps them quickly map out decision trees and agent logic.
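
The combination of retries and a human approval checkpoint can be illustrated without any framework. The sketch below is plain Python and does not use the LangGraph API; `WorkflowState`, `generate`, and `run_workflow` are hypothetical names, and the "model call" is a stub that fails once so the retry path is exercised.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class WorkflowState:
    """Context carried across every step, including retries."""
    task: str
    attempts: int = 0
    draft: str = ""
    approved: bool = False
    log: list = field(default_factory=list)


def generate(state: WorkflowState) -> WorkflowState:
    # Hypothetical stand-in for an LLM call; it returns nothing on the first try.
    state.attempts += 1
    state.draft = "" if state.attempts < 2 else f"patch for: {state.task}"
    state.log.append(f"generate attempt {state.attempts}")
    return state


def run_workflow(state: WorkflowState,
                 approve: Callable[[str], bool],
                 max_attempts: int = 3) -> WorkflowState:
    # Loop: retry generation until a draft exists or attempts run out.
    while not state.draft and state.attempts < max_attempts:
        state = generate(state)
    if not state.draft:
        state.log.append("gave up")
        return state
    # Checkpoint: the draft is only marked approved after a human decision.
    state.approved = approve(state.draft)
    state.log.append("approved" if state.approved else "rejected")
    return state


result = run_workflow(WorkflowState(task="fix flaky test"),
                      approve=lambda draft: True)
print(result.log)
```

Because the same state object travels through every attempt, the log and attempt count survive the retry, which is the property the paragraph above describes as maintaining context.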

"Frameworks are where innovation happens. Platforms are where deployment happens. The best teams use both." – Harrison Chase, CEO of LangChain

LangChain’s compatibility with a wide range of tools and platforms further strengthens its appeal for collaborative projects, although the breadth of options can make tool selection complex enough that teams turn to an AI project advisor.

LangChain supports Python and JavaScript/TypeScript, making it accessible to a broad range of developers. It integrates smoothly with popular IDEs like VS Code and JetBrains. The framework is part of a vast ecosystem, offering over 700 integrations with vector stores, LLMs, and other tools. It also uses the Model Context Protocol (MCP), which sets a universal standard for connecting agents to external tools.

LangChain’s design makes it ideal for scaling in team settings. Organizations using dedicated frameworks like LangChain report 55% lower per-agent costs compared to relying solely on platforms. Its modular structure reduces vendor lock-in, allowing teams to switch LLM providers or vector stores without overhauling their codebase. LangSmith further streamlines operations by handling tracing, error monitoring, and cost management. While LangChain has a steep learning curve, especially when using advanced LangGraph features, teams can achieve precise control over error recovery and branching logic with adequate effort.
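
The lock-in point comes down to application code depending on an interface rather than on one vendor's client. A minimal sketch of that idea, assuming two made-up providers (`ProviderA`, `ProviderB`) and a hypothetical `ChatModel` protocol; none of these names come from LangChain itself:

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface the application code is allowed to rely on."""
    def complete(self, prompt: str) -> str: ...


class ProviderA:
    # Hypothetical provider; a real one would call a vendor SDK here.
    def complete(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class ProviderB:
    # A second hypothetical provider with the same method signature.
    def complete(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


def summarize_diff(model: ChatModel, diff: str) -> str:
    # Application logic never names a concrete provider.
    return model.complete(f"Summarize this diff: {diff}")


a = summarize_diff(ProviderA(), "+ added retry")
b = summarize_diff(ProviderB(), "+ added retry")
print(a)
print(b)
```

Swapping providers changes one constructor call and leaves `summarize_diff` untouched.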

LangChain’s popularity is evident in its widespread adoption. Around 68% of production AI agents use frameworks like LangChain. The number of agent framework repositories with over 1,000 stars grew by 535% between 2024 and 2025, highlighting the industry’s shift toward graph-based orchestration. LangChain offers a free tier for open-source users, with paid plans starting at $39/month.

CrewAI models human team structures by assigning agents specific roles, such as researcher, coder, or tester, complete with backstories that reinforce their functions. This design makes it intuitive to manage multiple agents, especially during complex coding tasks.

One of CrewAI's standout abilities is task delegation. Agents can independently pass tasks to others, ask clarifying questions, and collaborate to tackle multi-step problems. The framework supports three collaboration styles: sequential, hierarchical, and consensus-based. Its "Crews" architecture organizes agents into teams, while "Flows" manage event-driven processes and conditional logic, ensuring smooth coordination. Additionally, built-in memory systems allow agents to learn from past interactions, enhancing their teamwork over time.
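
Two of the collaboration styles mentioned above, sequential and hierarchical, can be sketched in plain Python. This is not the CrewAI API: `Agent`, `run_sequential`, and `run_hierarchical` are hypothetical names, and `work` is a stub standing in for a model call. The consensus style is left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    role: str
    backstory: str

    def work(self, task: str, context: str = "") -> str:
        # Hypothetical stand-in for an LLM call shaped by role and backstory.
        suffix = f" (using: {context})" if context else ""
        return f"{self.role} handled '{task}'{suffix}"


def run_sequential(agents, task):
    """Each agent receives the previous agent's output as context."""
    context, outputs = "", []
    for agent in agents:
        context = agent.work(task, context)
        outputs.append(context)
    return outputs


def run_hierarchical(manager, workers, subtasks):
    """A manager routes each subtask to the worker with the matching role."""
    by_role = {worker.role: worker for worker in workers}
    results = [by_role[role].work(subtask) for role, subtask in subtasks]
    return manager.work("review results", "; ".join(results))


researcher = Agent("researcher", "Reads docs and prior issues.")
coder = Agent("coder", "Writes the implementation.")
tester = Agent("tester", "Writes and runs tests.")
manager = Agent("manager", "Assigns work and reviews output.")

outputs = run_sequential([researcher, coder, tester], "add login rate limit")
review = run_hierarchical(
    manager, [coder, tester],
    [("coder", "write limiter"), ("tester", "write tests")],
)
print(outputs[0])
print(review)
```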

"CrewAI allows you to define agents with specific roles (like researcher, writer, analyst) and backstories, making the system more intuitive and aligned with how human teams work." – AlphaMatch

CrewAI is exclusively a Python framework, requiring version 3.10 or higher (up to 3.14). It integrates seamlessly with popular Python IDEs like VS Code, PyCharm, and Jupyter Notebooks. A key feature is CrewAI Studio, a visual editor that lets users design and manage agent teams without writing code, making it accessible even for non-technical users. For developers, the CLI tool (crewai create crew) simplifies project setup with YAML-configured agent definitions, streamlining the process.

CrewAI is built for enterprise-level scalability through its Agent Management Platform (AMP). This platform includes tools for monitoring, role-based access control, and serverless container deployment. Currently, the framework supports over 450 million agent workflows per month. Its impact is evident in real-world applications: PwC improved code-generation accuracy from 10% to 70% using CrewAI workflows, General Assembly reduced curriculum development time by 90%, and DocuSign cut its lead "time-to-first-contact" by 75% after deploying CrewAI agents for data analysis.

CrewAI has garnered over 20,000 GitHub stars as of early 2026, reflecting its strong developer support. More than 100,000 developers have completed CrewAI certification programs. The framework is available as a free open-source version, with managed cloud and private infrastructure options for enterprise teams. Up next, we’ll dive into Microsoft AutoGen, which takes multi-agent orchestration to another level.

Microsoft AutoGen integrates AI agents directly into GitHub's pull request workflow. These agents handle tasks like creating branches, writing commit messages, and generating pull request descriptions, all while keeping a detailed audit trail of their actions.

The framework is designed to support workflows that involve human oversight, utilizing patterns like Sequential, Switch-case, and Loop. These patterns allow team members to step in during more intricate tasks. One standout feature is its integration with Microsoft Teams. Developers can launch agent sessions or open pull requests directly from Teams channels by tagging "@GitHub." The agent then uses the entire conversation thread as context. For distributed teams, AutoGen offers persistent cloud sessions, while also allowing local agents to assist with brainstorming before transitioning to the cloud for creating pull requests [32,34].
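
The three named patterns are ordinary control-flow combinators. The following sketch shows one way they compose, assuming each step is a function from a context dict to a context dict; the helper names (`sequential`, `switch`, `loop`) and the example steps are hypothetical and are not taken from the AutoGen API.

```python
from typing import Callable

Step = Callable[[dict], dict]


def sequential(*steps: Step) -> Step:
    """Run steps in order, threading the context through each one."""
    def run(ctx: dict) -> dict:
        for step in steps:
            ctx = step(ctx)
        return ctx
    return run


def switch(key: str, cases: dict, default: Step) -> Step:
    """Pick a step based on a context value, falling back to a default."""
    def run(ctx: dict) -> dict:
        return cases.get(ctx.get(key), default)(ctx)
    return run


def loop(step: Step, until: Callable[[dict], bool], max_iters: int = 5) -> Step:
    """Repeat a step until the condition holds or the iteration cap is hit."""
    def run(ctx: dict) -> dict:
        for _ in range(max_iters):
            if until(ctx):
                break
            ctx = step(ctx)
        return ctx
    return run


def classify(ctx):
    return {**ctx, "kind": "bug" if "error" in ctx["request"] else "feature"}

def fix_bug(ctx):
    return {**ctx, "action": "open_fix_pr"}

def escalate(ctx):
    # Anything the agents cannot route is handed to a person.
    return {**ctx, "needs_human": True}

def revise(ctx):
    return {**ctx, "revisions": ctx.get("revisions", 0) + 1}


pipeline = sequential(
    classify,
    switch("kind", {"bug": fix_bug}, default=escalate),
    loop(revise, until=lambda c: c.get("revisions", 0) >= 2),
)

bug_result = pipeline({"request": "error in login handler"})
feature_result = pipeline({"request": "add dark mode"})
print(bug_result)
print(feature_result)
```

The `default=escalate` branch is where a team member steps in, which matches the human-oversight role the paragraph describes.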

AutoGen v0.4 currently supports Python 3.10+ and .NET, with more languages in development [37,40]. It integrates seamlessly with Visual Studio Code through the AI Toolkit and GitHub Copilot extensions. Additionally, it works with IDEs like Visual Studio, JetBrains, Xcode, and Eclipse. For quick prototyping, AutoGen Studio provides a web-based, low-code interface for building agent teams with top AI tools [37,38]. Security-focused teams can execute model-generated code securely within Docker containers using the DockerCommandLineCodeExecutor [37,39].

AutoGen uses YAML definitions to manage agents, enabling teams to track and collaborate on agent behavior through standard Git workflows and version control. Since agent tasks rely on GitHub Actions minutes and premium requests, setting billing alerts is recommended to keep resource usage in check. Full access to features requires GitHub Copilot Pro, Pro+, Business, or Enterprise plans. For larger teams, the VS Code extension speeds up agent management with tools like full-text search and keyboard shortcuts, bypassing the slower web interface.

As of early 2026, AutoGen has earned 54,656 GitHub stars, reflecting strong community support. While the framework is open-source and free to use [39,40], enterprise features require paid Copilot licenses. AutoGen v0.4 also introduces a major shift to an asynchronous, event-driven architecture, improving its scalability and reliability.

Up next, delve into LlamaIndex's approach to data-driven collaboration.

LlamaIndex bridges the gap between AI agents and your team's data sources, making it a go-to choice for data-driven collaborative coding. With connections to over 300 data sources via LlamaHub - including Slack, APIs, databases, and PDFs - it enables AI assistants to access and utilize context from wherever your team stores its information.

LlamaIndex addresses one of the biggest challenges in large-scale projects: onboarding. Its shared codebase indexes allow new developers to get up to speed in minutes instead of hours. The sub-question engine further simplifies complex queries by breaking them into smaller, manageable retrieval steps. Additionally, its memory feature ensures that key decisions and context are saved and shared across the entire team, keeping everyone aligned.
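
The sub-question idea is: split a compound query, retrieve for each part, then combine. The sketch below uses a toy in-memory index with made-up passages and a keyword-based `decompose`; a real engine would use an LLM for decomposition and a vector index for retrieval, and none of these function names come from LlamaIndex.

```python
# Toy index with invented passages; stands in for a shared codebase index.
INDEX = {
    "auth": "Auth issues signed tokens and validates them on each request.",
    "billing": "Billing runs in a background worker and emits invoices.",
}


def decompose(question: str) -> list:
    # Hypothetical stand-in: one sub-question per indexed topic mentioned.
    lowered = question.lower()
    return [f"How does {topic} work?" for topic in INDEX if topic in lowered]


def retrieve(sub_question: str) -> str:
    # Return the first passage whose topic appears in the sub-question.
    for topic, passage in INDEX.items():
        if topic in sub_question:
            return passage
    return "No indexed context found."


def answer(question: str) -> list:
    """Pair every sub-question with the context retrieved for it."""
    return [(sub, retrieve(sub)) for sub in decompose(question)]


steps = answer("How do auth and billing interact?")
for sub_question, context in steps:
    print(sub_question, "->", context)
```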

As a code-first library, LlamaIndex supports Python and JavaScript/TypeScript, making it ideal for modern web and data science projects. It integrates effortlessly into existing development environments, giving developers granular control while building data-aware agents. These agents are capable of synthesizing information from multiple knowledge sources. LlamaIndex offers a free tier with 10,000 credits, with paid plans starting at $50 (Starter) and $500 (Pro), along with custom enterprise options for larger teams.

For enterprise projects, LlamaIndex helps maintain consistency through version-controlled rules. Teams can establish shared standards using repository files like .cursor/rules or CLAUDE.md to align both coding practices and agent behavior. Rated 4/5 for ecosystem size among major AI agent frameworks in 2026, LlamaIndex is production-ready and has a moderate learning curve. It excels at building internal team copilots capable of answering complex questions by querying company databases and communication tools simultaneously.

LlamaIndex benefits from a robust community, bolstered by its extensive integration ecosystem. While its technical nature - requiring Python and AI knowledge - might make it less accessible than low-code alternatives, this complexity translates into greater flexibility for advanced data integration scenarios. Its focus on retrieval-augmented generation (RAG) tools makes it especially effective for teams needing AI agents to pull data from multiple internal sources seamlessly.

Next, explore how Semantic Kernel further refines collaborative AI development.

Building on LlamaIndex's focus on data-driven solutions, Semantic Kernel takes a different path by emphasizing enterprise-level orchestration. Microsoft's framework is designed for large-scale, collaborative coding environments, making it a practical option for production-grade projects. As of February 2026, the project has garnered 27,207 GitHub stars and contributions from over 300 developers. It reportedly adds less than 50 ms of latency to LLM calls.

Semantic Kernel's plugin-based architecture allows developers to package business logic and API calls into reusable modules. Its multi-agent orchestration supports workflows like sequential, concurrent, handoff, and group chats, enabling specialized agents - such as Coder, Critic, and Planner - to tackle complex tasks. The framework also includes a prompt management system that handles versioning, testing, and deployment of prompts, treating them with the same rigor as code.
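
Packaging business logic as reusable, named functions can be pictured as a small registry. This is a generic sketch of the plugin pattern, not Semantic Kernel's API; `PluginRegistry`, the `tickets` plugin, and `summarize_ticket` are hypothetical.

```python
from typing import Callable


class PluginRegistry:
    """Maps 'plugin.function' names to plain Python callables."""

    def __init__(self) -> None:
        self._functions: dict = {}

    def register(self, plugin: str, name: str) -> Callable:
        def decorator(fn: Callable) -> Callable:
            self._functions[f"{plugin}.{name}"] = fn
            return fn
        return decorator

    def invoke(self, qualified_name: str, **kwargs) -> str:
        if qualified_name not in self._functions:
            raise KeyError(f"unknown plugin function: {qualified_name}")
        return self._functions[qualified_name](**kwargs)


registry = PluginRegistry()


@registry.register("tickets", "summarize")
def summarize_ticket(ticket_id: str) -> str:
    # Hypothetical business logic; a real plugin might call an internal API.
    return f"Ticket {ticket_id}: summary pending"


summary = registry.invoke("tickets.summarize", ticket_id="T-1")
print(summary)
```

An orchestrator only needs the qualified name and arguments, so the same function can be shared by a Coder, Critic, or Planner agent without duplication.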

"Semantic Kernel represents our vision for making AI integration as natural as calling any other API. We wanted to give developers the tools to compose AI services the same way they compose microservices." - John Maeda, Former VP of Design and AI at Microsoft

Semantic Kernel offers robust support for C#, Python, and Java, with C# enjoying the most comprehensive feature set. It integrates seamlessly with popular IDEs like Visual Studio Code, Cursor, Windsurf, and JetBrains IDEs. A dedicated VS Code extension simplifies editing agent configurations and prompts. Additionally, the framework supports the Model Context Protocol (MCP), allowing teams to standardize how agents access tools and data sources. These features make it an appealing choice for teams working on complex, distributed projects.

Designed for distributed teams, Semantic Kernel optimizes performance with features like connection pooling, request batching, and intelligent retries to handle API costs and high traffic. Its architecture is built to support dependency injection, middleware, and telemetry integration. The unified agent abstraction - AIAgent in .NET and BaseAgent in Python - ensures flexibility, allowing teams to switch providers without rewriting core logic. Released under the MIT license, the framework is free for commercial use, though LLM API usage is billed separately based on token consumption.
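
Retries and batching are general techniques, so they can be sketched independently of the framework. The example below assumes exponential backoff with a little jitter and fixed-size batches; the function names and default values are illustrative and say nothing about how Semantic Kernel implements these features internally.

```python
import random
import time


def call_with_retries(fn, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Retry transient failures, doubling the wait after each attempt."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except ConnectionError:
            if attempt == max_attempts:
                raise
            delay = base_delay * 2 ** (attempt - 1)
            # Small random jitter so parallel callers do not retry in lockstep.
            sleep(delay + random.uniform(0, delay / 10))


def batched(items, size):
    """Group items so several prompts can share one request."""
    return [items[i:i + size] for i in range(0, len(items), size)]


calls = {"n": 0}

def flaky():
    # Hypothetical API call that fails twice before succeeding.
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("transient")
    return "ok"


delays = []
result = call_with_retries(flaky, sleep=delays.append)  # record waits, don't sleep
groups = batched(["p1", "p2", "p3", "p4", "p5"], 2)
print(result, len(delays), groups)
```

Passing `sleep` as a parameter keeps the demo instant and makes the backoff schedule easy to inspect.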

Semantic Kernel has amassed 27.3k stars and 4.5k forks on GitHub, with over 2,500 projects relying on it. It’s used by more than 230,000 organizations, including 90% of Fortune 500 companies, through tools like Copilot Studio powered by Semantic Kernel. While LangChain leads in community size with over 80,000 stars, Semantic Kernel stands out in production settings due to its Azure integration and strong typing in C#.

"The rise of frameworks like Semantic Kernel signals a shift from AI as a research tool to AI as a fundamental component of enterprise software infrastructure." - Dr. Michael Torres, AI Research Director at Gartner

AutoGPT has become a go-to tool for automating intricate coding workflows. The shift from basic autocomplete tools to autonomous agents capable of completing entire features has reshaped how developers tackle collaborative projects.

AutoGPT builds on earlier frameworks by focusing on breaking down large tasks into smaller, manageable steps that agents can execute independently. Its orchestrator-based scaling allows multiple agents to work simultaneously on large-scale refactors or feature implementations. This means teams can deploy fleets of agents to handle different parts of a project at the same time, reducing delays caused by bottlenecks. Tight integration with IDEs ensures a smoother workflow, making this autonomy even more effective.
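
Orchestrator-based scaling amounts to splitting a task and running the pieces concurrently. A minimal sketch with `asyncio`, assuming a made-up split by module (`api`, `db`, `ui`) and a stub agent; `run_agent`, `split_task`, and `orchestrate` are hypothetical names and not part of AutoGPT.

```python
import asyncio


async def run_agent(name: str, subtask: str) -> str:
    # Hypothetical stand-in for model and tool calls made by one agent.
    await asyncio.sleep(0)
    return f"{name} finished: {subtask}"


def split_task(task: str) -> list:
    # Assumed decomposition: one subtask per module of the codebase.
    return [f"{task} in {module}" for module in ("api", "db", "ui")]


async def orchestrate(task: str) -> list:
    subtasks = split_task(task)
    jobs = [run_agent(f"agent-{i}", sub) for i, sub in enumerate(subtasks, 1)]
    # gather runs the agents concurrently and returns results in input order.
    return await asyncio.gather(*jobs)


results = asyncio.run(orchestrate("rename UserId to AccountId"))
for line in results:
    print(line)
```

Giving each agent a disjoint module is one simple way to respect the clear context boundaries that concurrent work on a shared codebase requires.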

The framework works seamlessly with Visual Studio Code, offering unified Chat and Sessions views to manage multiple agent sessions in one place. By 2026, VS Code's architecture has been optimized for smooth interactions with autonomous agents, enabling developers to oversee and guide agent activities directly from their editor. AutoGPT also uses MCP servers to facilitate smooth communication with code repositories.

To scale AutoGPT effectively, teams need to provide full access to repositories, documentation, and project history. This ensures agents can make well-informed decisions. The framework's orchestration features enable multiple agents to work on different tasks simultaneously, but careful coordination is essential to avoid conflicts in shared codebases. For teams handling complex projects, AutoGPT excels at managing automated refactors and completing features. However, successful scaling requires maintaining clear boundaries for each agent's context. These capabilities position teams to take on increasingly automated and intricate projects.

LangGraph is designed to handle production-level, stateful workflows with ease. Its graph-based structure supports loops, branching, and error recovery, making it ideal for collaborative coding. In February 2026, developer Prashanth created "Agentic IDE", a VS Code extension powered by LangGraph. The tool used a multi-agent system with a FastAPI backend and a TypeScript frontend, coordinating five specialized agents. To ensure stability, it enforced safeguards such as a 5-minute timeout and a limit of 50 requests per hour.
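
How the project implemented its safeguards is not described, but limits of that shape are commonly built from a sliding-window counter and a task timeout. The sketch below is one such construction under that assumption; `SlidingWindowLimiter` and `run_with_timeout` are hypothetical names, and only the two numeric limits come from the text above.

```python
import asyncio
import time
from collections import deque

AGENT_TIMEOUT_SECONDS = 5 * 60   # the 5-minute cap
MAX_REQUESTS_PER_HOUR = 50       # the hourly request limit


class SlidingWindowLimiter:
    """Allow at most max_requests within any window_seconds span."""

    def __init__(self, max_requests=MAX_REQUESTS_PER_HOUR,
                 window_seconds=3600, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock
        self._stamps = deque()

    def allow(self) -> bool:
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self._stamps and now - self._stamps[0] >= self.window_seconds:
            self._stamps.popleft()
        if len(self._stamps) >= self.max_requests:
            return False
        self._stamps.append(now)
        return True


async def run_with_timeout(coro, timeout=AGENT_TIMEOUT_SECONDS):
    """Return the coroutine's result, or None if it exceeds the timeout."""
    try:
        return await asyncio.wait_for(coro, timeout)
    except asyncio.TimeoutError:
        return None


fast = asyncio.run(run_with_timeout(asyncio.sleep(0, "done"), timeout=1))
slow = asyncio.run(run_with_timeout(asyncio.sleep(1, "late"), timeout=0.01))
print(fast, slow)
```

The injectable `clock` exists so the hourly window can be tested without waiting an hour.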

LangGraph introduces human-in-the-loop checkpointing, allowing agents to draft code that teams can review before changes are finalized. Teams can pause workflows at critical moments - such as when opening pull requests or altering databases - to maintain quality and avoid mistakes. Its standout "time-travel" debugging feature lets developers retrace agent actions, revert to earlier states, and experiment with alternative solutions without restarting the entire workflow. Andres Torres, Sr. Solutions Architect, highlighted its flexibility:

"LangGraph enables granular control over the agent's thought process, empowering data-driven decisions to meet diverse needs."

LangGraph supports Python and JavaScript/TypeScript, two leading languages in AI development. It integrates seamlessly with VS Code, utilizing stateful backends such as FastAPI for Python or WebSockets for real-time updates. The framework also supports the Model Context Protocol (MCP), connecting it to a network of over 700 AI tools, including vector stores, LLMs, and retrievers. This broad compatibility ensures teams can build scalable and efficient collaborative systems.

LangGraph is built for high-concurrency environments, offering fault-tolerant scalability through horizontally scalable servers and task queues. PostgreSQL checkpointing keeps workflows intact, even after server restarts, allowing team members to pick up where they left off - even after significant delays. Concurrency controls, like double-texting prevention and thread management, ensure smooth operation when multiple developers or agents work simultaneously.
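
Durable checkpointing means writing each step's state to a database keyed by thread, so a workflow can resume from the latest step or rewind to an earlier one. The sketch below swaps in Python's built-in `sqlite3` for PostgreSQL to stay self-contained, and it is not LangGraph's checkpointer API; `CheckpointStore` and the `pr-review` thread are hypothetical.

```python
import json
import sqlite3
from typing import Optional


class CheckpointStore:
    """Append-only history of workflow state, keyed by thread and step."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        conn.execute(
            "CREATE TABLE IF NOT EXISTS checkpoints ("
            "thread_id TEXT, step INTEGER, state TEXT, "
            "PRIMARY KEY (thread_id, step))"
        )

    def save(self, thread_id: str, state: dict) -> int:
        row = self.conn.execute(
            "SELECT COALESCE(MAX(step), 0) FROM checkpoints WHERE thread_id = ?",
            (thread_id,),
        ).fetchone()
        step = row[0] + 1
        self.conn.execute(
            "INSERT INTO checkpoints VALUES (?, ?, ?)",
            (thread_id, step, json.dumps(state)),
        )
        self.conn.commit()
        return step

    def load(self, thread_id: str, step: Optional[int] = None) -> Optional[dict]:
        if step is None:
            # Resume: newest checkpoint for this thread.
            query = ("SELECT state FROM checkpoints WHERE thread_id = ? "
                     "ORDER BY step DESC LIMIT 1")
            params = (thread_id,)
        else:
            # Rewind: a specific earlier checkpoint.
            query = "SELECT state FROM checkpoints WHERE thread_id = ? AND step = ?"
            params = (thread_id, step)
        row = self.conn.execute(query, params).fetchone()
        return json.loads(row[0]) if row else None


conn = sqlite3.connect(":memory:")  # use a file or server database for real durability
store = CheckpointStore(conn)
store.save("pr-review", {"stage": "draft"})
store.save("pr-review", {"stage": "tests_written"})

resumed = CheckpointStore(conn).load("pr-review")  # a fresh store sees saved state
rewound = store.load("pr-review", step=1)
print(resumed, rewound)
```

Keeping every step rather than overwriting the latest one is what makes both resuming and rewinding possible from the same table.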

LangGraph Studio takes collaboration a step further with its visual IDE. This tool makes it simple to prototype, debug, and share agent architectures. Teams can design and refine complex multi-agent systems visually, cutting down on the need for repetitive boilerplate code. It’s a powerful addition that streamlines the development process and encourages team collaboration.

Below is a comparison of seven popular AI frameworks designed for collaborative coding. This table highlights their key features, supported programming languages, and scalability, offering a quick overview of their strengths.

LangChain and LangGraph dominate in adoption, with an impressive 47 million downloads each. CrewAI stands out for its role-based delegation, where specialized agents manage distinct tasks, making it ideal for enterprise-level operations using Python. Meanwhile, Microsoft AutoGen introduces GroupChat for multi-agent collaboration, offering seamless integration with Azure and support for Python and .NET.

| Framework | Collaborative Features | Language Support | Team Scalability |
|---|---|---|---|
| LangChain | Human-in-the-loop checkpointing | Python, JavaScript/TypeScript | High (47M downloads) |
| CrewAI | Role-based delegation | Python | High (Enterprise hosting) |
| Microsoft AutoGen | GroupChat conversations | Python, .NET | High (Azure) |
| LlamaIndex | Shared codebase indexes | Python, JavaScript | – |
| Semantic Kernel | Version-controlled rule files | Python, C#, Java | – |
| AutoGPT | Autonomous task execution | Python | – |
| LangGraph | Human-in-the-loop checkpointing | Python, JavaScript/TypeScript | High (47M downloads) |

Semantic Kernel provides support for Python, C#, and Java, catering to diverse programming needs. LlamaIndex simplifies knowledge sharing, making it easier for new team members to get up to speed. Lastly, AutoGPT focuses on autonomous task execution, streamlining workflows for teams.

Selecting the right AI framework can significantly improve team productivity, reduce costs, and ensure that your codebase is maintainable. Based on the comparisons outlined in this guide, your decision should align with your team's workflow, technical expertise, and specific production needs. Whether it's LangChain with its impressive 47 million downloads, CrewAI, or Microsoft AutoGen, the ideal choice depends on how well the framework complements your team's strengths and project goals.

Consider your team's requirements carefully. For Python-focused teams working on data-intensive projects, options like LlamaIndex or LangChain are strong contenders. On the other hand, Semantic Kernel offers robust support for teams using .NET or Java. It's worth noting that while agent-based frameworks can lower per-agent costs by 55%, they often come with 2.3x higher initial setup times compared to simpler platform-only solutions.
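
The trade-off between 55% lower per-agent costs and 2.3x setup time implies a break-even point. A rough way to estimate it, assuming setup time converts proportionally into setup cost and using invented dollar figures purely for illustration:

```python
import math


def break_even_agents(platform_setup_cost: float,
                      platform_cost_per_agent: float,
                      setup_multiplier: float = 2.3,
                      per_agent_saving: float = 0.55) -> float:
    """Number of agents at which the framework's extra setup is paid back."""
    extra_setup = (setup_multiplier - 1) * platform_setup_cost
    saving_per_agent = per_agent_saving * platform_cost_per_agent
    return extra_setup / saving_per_agent


# Hypothetical inputs: $10,000 platform setup, $1,000 per agent on the platform.
exact = break_even_agents(10_000, 1_000)
agents_needed = math.ceil(exact)
print(f"break-even at about {exact:.1f} agents, so {agents_needed} in practice")
```

With these made-up inputs, the extra setup cost is 1.3 × 10,000 = 13,000 and each agent saves 0.55 × 1,000 = 550, so the framework pays off after roughly 24 agents. Smaller deployments would favor the simpler platform under the same assumptions.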

Run tests on real MVPs to gauge how each framework handles production challenges, error management, and cost efficiency. Keep a close eye on LLM API usage, as complex agent interactions can quickly drive up expenses. As Yohei Nakajima insightfully points out:

"The frameworks that win long-term won't be the ones with the most features. They'll be the ones that make the common patterns trivial and the uncommon patterns possible."

These frameworks aren't just about generating code - they also transform how teams collaborate on AI-driven projects. For those comparing features, pricing, and integration options, the AI Tools Directory is a valuable resource, offering in-depth analyses and real-time updates to keep you informed in this fast-changing field. With an estimated 68% of production AI agents built on open-source frameworks as of 2025, access to reliable evaluation tools is even more critical.

After thorough testing and careful evaluation, your chosen framework will serve as the foundation for efficient, maintainable teamwork. The options discussed here represent the forefront of collaborative AI development in 2026. Match your selection to your team's expertise, test extensively, and resist the temptation to over-complicate with multi-agent systems unless absolutely necessary.