AI Operating Systems (AI OS) are the next big step in enterprise AI. Unlike standalone AI tools or agents, an AI OS acts as the central hub, enabling collaboration, memory sharing, and intelligent orchestration across systems. Early adoption of AI OS platforms is critical for businesses to reduce costs, improve productivity, and maintain control over their processes.

The bottom line: AI OS is more than just a tool - it's the framework for the future of enterprise AI. Companies that act now will lead in efficiency and innovation, while those that delay risk falling behind.

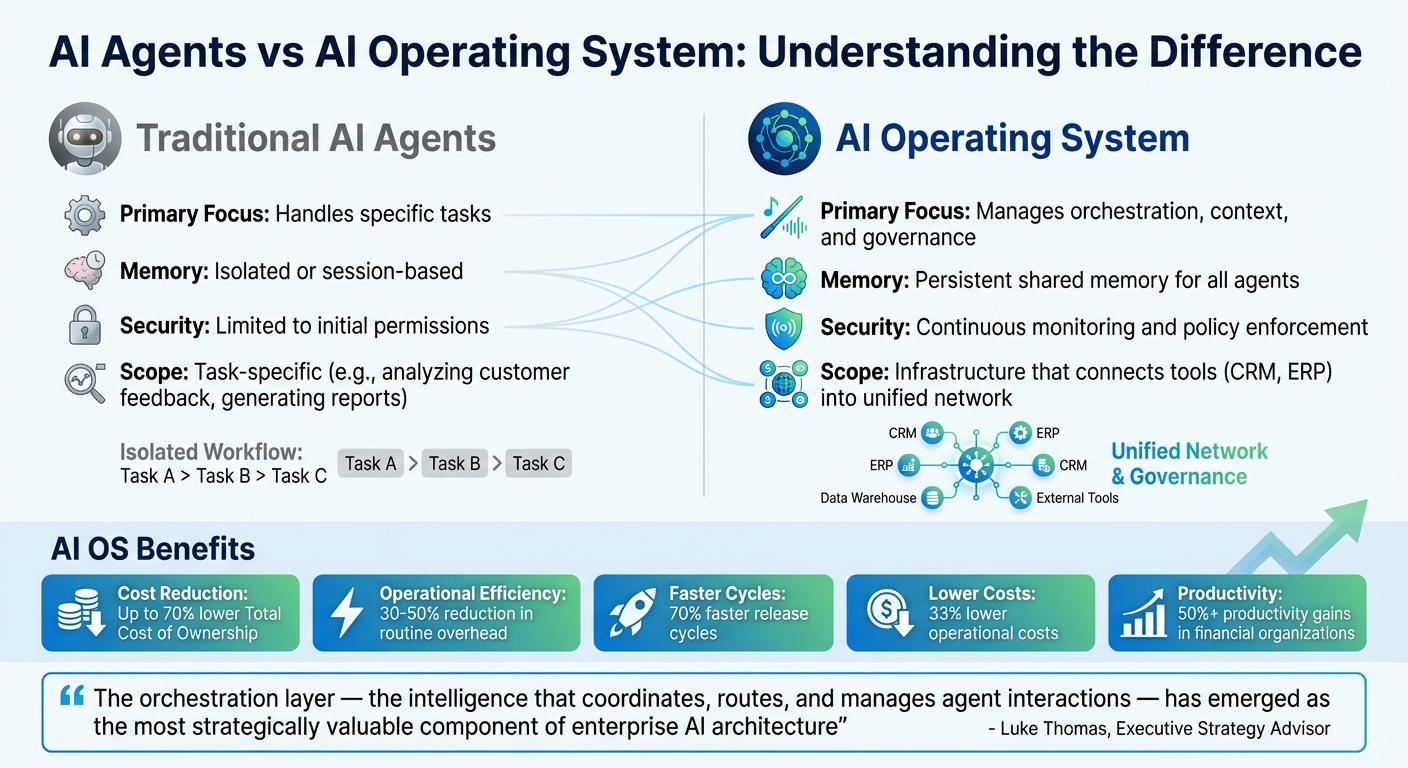

AI Agents vs AI Operating System: Key Differences and Benefits

An AI Operating System (AI OS) is essentially a software layer that oversees and manages multiple AI systems within an organization. Think of it as the central hub that ensures AI agents collaborate effectively, share resources, and operate within set guidelines. It’s not just about running AI tools but about orchestrating them to work together seamlessly, giving businesses a competitive edge.

Unlike standalone AI tools, an AI OS handles more complex tasks. It organizes fleets of autonomous agents, maintains a shared memory for context, and optimizes resource allocation using advanced methods like parallel data processing. It also integrates security and governance features, such as real-time compliance enforcement, audit trails, and safeguards like personal data redaction. Another key function is managing AI model lifecycles, allowing for version updates and easy switching between providers.

Luke Thomas, an Executive Strategy Advisor, highlights the growing importance of this layer:

"The orchestration layer - the intelligence that coordinates, routes, and manages agent interactions - has emerged as the most strategically valuable component of enterprise AI architecture."

This orchestration capability is what differentiates an AI OS from individual AI agents, making it a cornerstone of modern AI infrastructure.

The difference between an AI OS and individual AI agents lies in their roles. AI agents are task-specific, designed to handle jobs like analyzing customer feedback or generating reports. An AI OS, on the other hand, provides the infrastructure that allows these agents to function efficiently. It connects tools, maintains a shared memory, and enforces governance, creating a cohesive system.

An AI OS acts like the "connective tissue" of an organization’s tech ecosystem, linking tools like CRM, ERP, and other systems into a unified network. This integration enables smoother workflows and better interoperability.

| Aspect | Traditional AI Agents | AI Operating System (AI OS) |
|---|---|---|
| Primary Focus | Handles specific tasks | Manages orchestration, context, and governance |
| Memory | Isolated or session-based | Persistent shared memory for all agents |
| Security | Limited to initial permissions | Includes continuous monitoring and policy enforcement |

The benefits of adopting an AI OS are already evident. For example, large teams using models like GPT-4 often face monthly cloud API costs exceeding $50,000. Running models on a local AI OS makes these costs more predictable. Additionally, organizations report a 30–50% drop in routine operational overhead, such as code reviews and log analysis, after deploying an AI OS. As Fluid AI puts it, "AI OS isn't just a product category - it's a paradigm shift toward systems that think, coordinate, and act autonomously."

The current AI OS landscape offers a range of platforms designed to meet specific operational needs. Knowing these options can help organizations find the right fit for their technical goals and infrastructure.

Agentic Operating Systems focus on managing networks of autonomous AI agents at an enterprise level. These platforms handle coordination, enforce compliance, and oversee resource management across multiple agents.

Take aiXplain Agentic OS, for example. It uses a micro-agent design with specialized components like the "Mentalist" for breaking down goals, the "Orchestrator" for task execution, the "Inspector" for monitoring compliance, and the "Bodyguard" for security. This setup ensures secure and adaptable deployment options.

Another example is Opulent OS 2.0, launched in September 2025. This platform features tools like Interactive Workflow Planning, which allows users to review and adjust execution plans before agents run them, and Secure Sandbox Execution, which isolates code in containerized environments to prevent security risks.

For teams aiming to build custom solutions, OpenDevin offers an open-source platform for autonomous software engineering. It supports multi-agent planning and provides sandboxed shell access, making it ideal for tasks like code automation.

While agent-based systems are one approach, some platforms position AI models themselves as the core operating system.

In this model, the AI's context window acts as operating memory, with external storage providing additional capacity. APIs function as peripherals, extending the model's ability to interact with external systems.
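The memory analogy can be made concrete with a small sketch. The `ContextManager` class below is hypothetical and not taken from any platform named in this article: it treats a bounded message window as working memory and pages older messages out to an archive that stands in for external storage. The word-count tokenizer and keyword lookup are deliberate simplifications.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class ContextManager:
    """Bounded 'context window' that pages old messages out to an archive."""
    max_tokens: int
    window: deque = field(default_factory=deque)  # in-context messages (the "RAM")
    archive: list = field(default_factory=list)   # external storage (the "disk")

    @staticmethod
    def count_tokens(text: str) -> int:
        # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
        return len(text.split())

    def used(self) -> int:
        return sum(self.count_tokens(m) for m in self.window)

    def add(self, message: str) -> None:
        self.window.append(message)
        # Evict the oldest messages until the window fits the token budget.
        while self.used() > self.max_tokens and len(self.window) > 1:
            self.archive.append(self.window.popleft())

    def recall(self, keyword: str) -> list:
        # Naive keyword search standing in for retrieval from external storage.
        return [m for m in self.archive if keyword.lower() in m.lower()]
```

A production system would likely swap the keyword search for vector retrieval and use the model's own tokenizer, but the paging behavior is the point of the analogy.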

NeuralOS is a prime example of this concept. It serves as a local-first desktop environment for developers, managing GPU schedulers and model registries while keeping data on-premises. This addresses concerns like cloud latency and regulatory compliance, especially in industries like healthcare and finance. In October 2025, a fintech company used NeuralOS to automate incident triage for over 200 microservices, cutting mean-time-to-resolution by 43% in six months by integrating it with existing systems and human-approval workflows.

This approach contrasts with full-stack solutions, which offer a more comprehensive integration of computing, storage, and networking.

Full-stack AI platforms combine all critical components into a unified system, simplifying the complexities of GPU management and enabling seamless access to data across various environments, including edge, cloud, and on-premises.

For example, VAST AI OS integrates three main components: DataStore for storage, DataBase for transactions, and DataEngine for computing. This setup minimizes I/O bottlenecks and supports massive datasets with nearly 100% uptime for GPU workloads. It also supports protocols like NFS, SMB, S3, SQL, and Kafka-compatible streaming, with deployment options across platforms like AWS, Azure, GCP, Oracle, and edge environments.

Another option is Sealos, which provides multi-tenant Kubernetes, cost isolation, and an application marketplace for tools like model servers and vector databases. It’s particularly useful for organizations building custom AI OS solutions on reliable infrastructure.

Even consumer-focused solutions are emerging. Xiaomi's HyperOS integrates on-device multimodal capabilities with IoT orchestration, enabling seamless, intent-driven device management. Meanwhile, a German automotive supplier using CognOS on edge devices achieved a 99.2% prediction accuracy for critical failures, saving an estimated $4.8 million annually by preventing downtime.

The variety of these platforms highlights the market's experimental phase, offering businesses the flexibility to choose between agent-based systems, model-driven architectures, or full-stack solutions based on their needs and priorities.

Getting ahead with an AI OS early allows companies to take charge of their operational DNA. By owning the orchestration layer - the system that manages and coordinates AI agents - you ensure that your unique process innovations remain proprietary. Relying on external vendors for this layer risks turning your competitive edge into their training data, effectively commoditizing your advantage.

"This isn't middleware. This is your company's operational DNA translated into executable intelligence." - Luke Thomas, Executive Strategy Advisor

Early adopters enjoy substantial operational and cost benefits. These include 30–50% reductions in routine overhead tasks like log analysis and documentation, along with faster development cycles that drastically shorten time-to-market.

Unlike traditional software, which often requires costly upgrades every 3–5 years, an AI OS evolves seamlessly. It integrates new model capabilities automatically, eliminating planned downtime and shifting spending from unpredictable capital expenditures to more manageable operating expenses.

These efficiencies create a solid foundation for companies to seize opportunities in emerging markets.

Several trends are driving the urgency to adopt AI OS platforms early.

First, consolidation is replacing fragmented solutions. Businesses are moving away from piecing together disconnected tools like chatbots and classifiers. Instead, they’re opting for unified platforms that provide consistent policies and audit trails across the organization. By 2027, it’s estimated that over 40% of enterprise knowledge work will flow through AI-first systems.

Second, frontier model providers are climbing the value chain. Companies such as OpenAI and Anthropic are no longer just offering models - they’re becoming platform operators and application vendors. Organizations that don’t control their orchestration layer risk higher switching costs and shrinking margins as their process intelligence becomes locked into vendor ecosystems.

Lastly, regulatory demands are pushing for localized architectures. Industries like healthcare, finance, and government face increasing pressure to ensure data residency. This is leading to a preference for on-premises AI OS platforms that safeguard sensitive data while retaining intelligence internally.

In today’s competitive landscape, creating an effective AI OS isn’t just an option - it’s a necessity. Here’s how you can approach it step by step.

The first step in building your AI OS is selecting the right components across its five core layers: Cognitive Core (reasoning), Perception Layer (input processing), Action Layer (execution), Policy & Safety (guardrails), and Experience Surface (human interface). The AI Tools Directory is an invaluable resource for this process. With over 1,527 AI tools to explore, you can filter by categories like automation, development, audio, and video, and compare vendor-neutral options based on cost-performance and compatibility with standardized protocols. These protocols transform APIs into composable interfaces for seamless integration.

For the Cognitive Core, consider using a local execution engine like Ollama or vLLM, paired with a foundation model such as Llama 3.1 or Mistral. Running these models locally on GPUs with 12–16GB of VRAM can significantly reduce API latency and mitigate risks of data leakage. For the Action Layer, tools like CrewAI, LangChain, or OpenDevin are excellent for executing commands across shells, APIs, and UI automation. To ensure robust guardrails in the Policy & Safety layer, leverage Open Policy Agent (OPA). Finally, frameworks like Next.js, Tauri, or Electron can help you build user-friendly interfaces for the Experience Surface.

"There's not an operating system for everything to plug into … things need to be built for a world of AI in order for that AI to work and scale." – Adrian Aoun, CEO at Forward

Thoughtful component selection is the cornerstone of a secure and compliant AI OS, setting the stage for the next critical steps.

A secure and compliant AI OS requires a modular structure divided into four planes: Control, Data, Trust, and DevEx. This setup ensures scalability and governance as your system grows. Start by implementing a zero-trust architecture using mutual TLS (mTLS) for agent communication and sandboxing to restrict resource access. For systems handling multiple users, multi-tenant isolation - like Row-Level Security (RLS) - adds an extra layer of protection.

For industries such as healthcare or finance, an on-premises "sovereign" architecture is often essential. This approach eliminates external API dependencies, ensuring compliance with data residency rules and avoiding vendor lock-in. Before processing any data through your foundation models, route it through a dedicated layer that redacts, masks, or hashes Personally Identifiable Information (PII). Additionally, safeguard against runaway processes by incorporating kill-switches, such as runtime limits and quota management. These measures can prevent unexpected expenses, such as cloud bills exceeding $50,000 per month.
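As a rough illustration of the redaction layer and kill-switch described above, the sketch below pseudonymizes email addresses, masks US-style Social Security numbers, and enforces a call and spend quota. The regular expressions, the `redact` and `BudgetGuard` names, and the salt handling are all assumptions made for this example; real PII detection needs far broader coverage than two patterns.

```python
import hashlib
import re

# Illustrative patterns only; production redaction needs many more PII types.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def pseudonymize(value: str, salt: str) -> str:
    # Salted hash: the same input maps to the same token without exposing the value.
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return f"<email:{digest[:12]}>"


def redact(text: str, salt: str = "demo-salt") -> str:
    """Hash emails and mask SSN-shaped numbers before text reaches a model."""
    text = EMAIL_RE.sub(lambda m: pseudonymize(m.group(0), salt), text)
    return SSN_RE.sub("<ssn:redacted>", text)


class QuotaExceeded(RuntimeError):
    """Raised when a call would exceed the configured budget."""


class BudgetGuard:
    """Kill-switch that refuses further calls once a call or spend limit is hit."""

    def __init__(self, max_calls: int, max_cost_usd: float):
        self.max_calls = max_calls
        self.max_cost_usd = max_cost_usd
        self.calls = 0
        self.spent = 0.0

    def charge(self, cost_usd: float) -> None:
        if self.calls + 1 > self.max_calls or self.spent + cost_usd > self.max_cost_usd:
            raise QuotaExceeded("budget exhausted; refusing further model calls")
        self.calls += 1
        self.spent += cost_usd
```

Calling `guard.charge(estimated_cost)` before every model request means a runaway agent stops at the quota instead of accumulating spend.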

Deploying your AI OS on Kubernetes with Helm charts and GitOps tools like ArgoCD can help manage resources effectively. Use horizontal pod autoscaling to maintain high availability, and adopt policy-as-code practices - such as OPA-style policies with hash-chained audit trails - for cryptographic verification of every action. These strategies not only secure your system but also enhance operational efficiency.
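One way to picture a hash-chained audit trail: each entry stores the hash of the previous entry, so altering any past record breaks every later link. The `AuditLog` class below is a minimal in-memory sketch under that assumption. It is not OPA's decision-log format, and a real deployment would also persist entries and anchor the chain somewhere the writer cannot modify.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, action: dict) -> str:
    # Canonical JSON (sorted keys) so the same action always hashes identically.
    payload = json.dumps({"prev": prev_hash, "action": action}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self):
        self.entries = []

    def append(self, action: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"prev": prev, "action": action, "hash": _digest(prev, action)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute every link; any edited or reordered entry fails the check."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev:
                return False
            if entry["hash"] != _digest(entry["prev"], entry["action"]):
                return False
            prev = entry["hash"]
        return True
```

The chain makes tampering detectable rather than impossible: someone who can rewrite the whole log can rebuild it, which is why the latest hash is usually published or stored separately.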

Once the core functions of your AI OS are in place, focus on continuous improvement by integrating new technologies.

Design your system for flexibility with "hot-swappable" intelligence, using contract-first tool APIs and A/B routing. This setup allows you to replace models or kernels effortlessly, avoiding vendor lock-in. An AI-agnostic router can ensure uptime and optimize costs by dynamically switching between providers like OpenAI, Anthropic, or Mistral.
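The routing idea can be sketched as a shared contract plus ordered fallback. In the example below, `Provider`, `Router`, and the two stub backends are hypothetical names; no real vendor SDK is called, and the sketch covers cost-ordered fallback only, not A/B traffic splitting.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Provider:
    name: str                       # hypothetical label, not a real vendor SDK
    cost_per_call: float            # illustrative relative cost
    complete: Callable[[str], str]  # the shared contract every backend must satisfy


class AllProvidersFailed(RuntimeError):
    """Raised when no provider could serve the request."""


class Router:
    def __init__(self, providers: List[Provider]):
        # Try cheaper providers first; fall back to pricier ones on failure.
        self.providers = sorted(providers, key=lambda p: p.cost_per_call)

    def complete(self, prompt: str) -> Tuple[str, str]:
        errors = []
        for provider in self.providers:
            try:
                return provider.name, provider.complete(prompt)
            except Exception as exc:  # any failure triggers fallback in this sketch
                errors.append(f"{provider.name}: {exc}")
        raise AllProvidersFailed("; ".join(errors))


def flaky_backend(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def stable_backend(prompt: str) -> str:
    return f"echo: {prompt}"


router = Router([
    Provider("stable", cost_per_call=0.02, complete=stable_backend),
    Provider("flaky", cost_per_call=0.01, complete=flaky_backend),
])
served_by, answer = router.complete("summarize ticket 42")
```

Because callers depend only on the `complete` contract, replacing a backend is a configuration change rather than a code change, which is the property the "hot-swappable" design is after.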

Centralize essential services through an AI App Server that standardizes common functions, such as short- and long-term memory, hybrid retrieval-augmented generation (RAG), safety filters, and evaluation frameworks. For sensitive tasks, like financial transactions or database migrations, integrate Human-in-the-Loop (HITL) workflows that require cryptographic proof of approval. To maximize interoperability, select tools supporting the Model Context Protocol (MCP), and streamline agent collaboration with modular memory handling, shared memory pools, and layered caching.
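A human-approval gate with cryptographic proof might look like the following sketch, which uses an HMAC over the exact action payload. The function names and the shared-secret scheme are assumptions for illustration; a real system would more likely use per-approver asymmetric signatures so the executor cannot forge approvals.

```python
import hashlib
import hmac
import json


def _canonical(action: dict) -> bytes:
    # Stable serialization so the approver and executor hash identical bytes.
    return json.dumps(action, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_approval(action: dict, approver: str, key: bytes) -> str:
    """Produce an approval token bound to this exact action and approver."""
    message = approver.encode("utf-8") + b"\n" + _canonical(action)
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def execute_if_approved(action: dict, approver: str, signature: str, key: bytes, run):
    """Run the action only if the signature matches; otherwise refuse."""
    expected = sign_approval(action, approver, key)
    if not hmac.compare_digest(expected, signature):
        raise PermissionError("approval signature does not match this action")
    return run(action)
```

Binding the signature to the serialized action means an approval for one transfer or migration cannot be replayed against a modified request.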

As your system evolves, implement changes without disrupting operations. Start with a 2–4 week audit to assess capabilities, then pilot a high-value service over 3–6 months to demonstrate ROI. From there, scale your system over 6–12 months by refactoring domain logic and integrating additional services. This phased approach helps you adapt to new needs while maintaining stability and performance.

Adopting an AI OS early offers a range of benefits that go far beyond just automation. From cutting costs to gaining a competitive edge, businesses that invest in this technology position themselves for long-term success. Building on the technical efficiencies discussed earlier, these advantages highlight why early adoption is a smart move for companies aiming to lead their industries.

One of the clearest benefits is financial efficiency. By integrating an AI OS, companies can avoid the costly cycle of system overhauls every 3–5 years. Instead, they benefit from automatic updates to foundational models, which can reduce the Total Cost of Ownership by 50–70% compared to traditional IT refreshes. Additionally, businesses experience 70% faster release cycles and 33% lower operational costs thanks to agent-based automation. For example, a fintech company using NeuralOS reported a 43% reduction in resolution time. Similarly, a German automotive supplier implemented CognOS and achieved 99.2% accuracy in predicting critical failures, saving approximately $4.8 million annually.

AI OS adoption also strengthens market positioning. Take Walmart, for instance. In 2021, the company launched an AI pilot to negotiate supplier contracts for items like shopping carts and fleet services. The results? A 3x higher success rate in closing deals, 3% cost savings, and extended payment terms to 35 days. IKEA offers another compelling example. Between 2021 and 2023, their AI chatbot "Billie" handled 47% of all customer inquiries. This allowed IKEA to retrain 8,500 call center employees as remote interior design advisers, shifting their roles from routine support to more specialized, value-driven tasks.

The productivity boost from AI OS adoption is another game-changer. Financial organizations that redesigned workflows around AI-driven platforms saw productivity jump by over 50%. Customer service teams using generative AI reported 14% productivity gains. Sectors like finance and IT, which heavily rely on AI, experienced 4.8x faster labor productivity growth compared to those with less AI exposure. An AI OS also bridges skill gaps quickly - new employees can match the performance of seasoned workers in just 3 months instead of 10.

Another key advantage is operational control and flexibility. Companies that manage their own orchestration layer retain control over their unique processes and prevent them from becoming training data for external vendors. By using standardized protocols, an AI OS enables businesses to switch AI providers without needing custom code, ensuring vendor flexibility. As Christina Inge from Harvard Division of Continuing Education puts it:

"Your job will not be taken by AI. It will be taken by a person who knows how to use AI."

Early adopters not only gain these immediate benefits but also build the expertise and control needed to maintain their leadership in the market.

The shift from AI agents to an AI OS is transforming how businesses integrate intelligence into their operations. Companies that act swiftly can seize control of their orchestration layer - the operational framework that dictates how work flows across the organization. As Luke Thomas aptly states:

"The divergence point is now. Once orchestration dependencies are established, extraction becomes exponentially more difficult."

Postponing adoption hands control to third-party vendors and risks lagging behind competitors.

Projections highlight a stark productivity gap, with analysts forecasting a 4-to-1 disparity by 2027, emphasizing the importance of early action. Early adopters are already experiencing measurable benefits, including 70% faster release cycles and 33% lower operational costs. These figures make a compelling case for immediate, data-backed strategies.

To begin, consider launching a 90-day pilot targeting high-volume, rule-based workflows. Use resources like the AI Tools Directory to identify reliable components, form a cross-functional AI council for governance, and deploy agents in shadow mode before granting them full autonomy.
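Shadow mode can be sketched as a wrapper that lets the agent propose a decision for every request while only the human decision takes effect. The `ShadowRunner` class and its agreement metric below are hypothetical, intended only to show how a pilot might gather evidence before granting autonomy.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class ShadowRunner:
    agent: Callable[[str], str]
    # Each record is (request, human_decision, agent_proposal).
    records: List[Tuple[str, str, str]] = field(default_factory=list)

    def handle(self, request: str, human_decision: str) -> str:
        try:
            proposal = self.agent(request)
        except Exception as exc:
            # Agent failures are logged, never allowed to affect the outcome.
            proposal = f"<error: {exc}>"
        self.records.append((request, human_decision, proposal))
        return human_decision  # only the human decision takes effect

    def agreement_rate(self) -> float:
        if not self.records:
            return 0.0
        matches = sum(1 for _, human, agent in self.records if human == agent)
        return matches / len(self.records)
```

Reviewing the disagreements in `records`, not just the rate, is usually what tells a governance council whether the agent is ready for a wider rollout.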

While having a strong technical foundation is critical, the real competitive edge lies in owning your orchestration layer. Protect this layer by implementing controlled gateways to manage external providers. Adopt standardized protocols, such as the Model Context Protocol (MCP), to maintain flexibility, so you can switch between models and vendors without overhauling your codebase. Design your AI OS with adaptability in mind, so it can seamlessly integrate advancements in foundation models and emerging capabilities without requiring disruptive overhauls.