AI systems in 2026 rely on two key components: AI agent skills and skills graphs. Together, they improve performance, efficiency, and workflow management for advanced automation.

Key takeaway: Combining these two approaches creates smarter, more reliable AI systems by leveraging task expertise (skills) and structured execution (graphs). This dual-layer system is reshaping how AI workflows are designed and executed.

AI agent skills are modular units designed to handle specific tasks. Unlike standard tools that execute single API calls, these skills manage entire workflows. They include built-in logic to determine when to start, how to proceed, and when to stop. Each skill follows a structured framework - covering applicability, execution, termination, and a reusable interface - to enhance how agents manage tasks.
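The four-part framework (applicability, execution, termination, reusable interface) can be pictured with a minimal Python sketch. The `Skill` class, its field names, the `max_steps` cap, and the toy coin-counting skill are all hypothetical illustrations, not the format used by any particular skills library.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

State = Dict[str, Any]


@dataclass
class Skill:
    """Hypothetical skill made of the four parts described in the text."""
    name: str
    applies: Callable[[State], bool]   # applicability: should the skill start?
    step: Callable[[State], State]     # execution: advance the workflow one step
    done: Callable[[State], bool]      # termination: should the skill stop?
    max_steps: int = 10                # assumed safety cap against endless loops

    def run(self, state: State) -> State:
        """Reusable interface: callers only ever invoke run()."""
        if not self.applies(state):
            return state
        for _ in range(self.max_steps):
            if self.done(state):
                break
            state = self.step(state)
        return state


# Toy skill: add up coin values until the list is empty.
count_coins = Skill(
    name="count-coins",
    applies=lambda s: "coins" in s,
    step=lambda s: {**s, "coins": s["coins"][1:], "total": s.get("total", 0) + s["coins"][0]},
    done=lambda s: not s["coins"],
)

result = count_coins.run({"coins": [1, 5, 10]})
print(result["total"])
```

Keeping the start and stop conditions next to the execution logic is what lets an agent treat the skill as a single callable unit rather than planning each step itself.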

These skills act like a procedural memory system, automatically activating proven workflows when specific conditions are met, much as humans recall expertise from memory. Their effect varies widely by task, however. In February 2026, the SkillsBench evaluation tested 7,308 agent workflows across 84 tasks. On the "mario-coin-counting" task, success rates rose from 2.9% to 88.6% - an increase of 85.7 percentage points - when curated skills were applied. Conversely, the "taxonomy-tree-merge" task saw a drop of 39.3 percentage points.

Data also shows that human-curated skills consistently outperform those generated by agents themselves. When agents attempted to create their own procedural workflows, performance dipped by an average of 1.3 percentage points. This suggests that the gains come from curated, structured procedures rather than from the mere presence of a skill.

By automatically activating skills, workflows become more efficient through progressive disclosure - a method that loads information only as needed. This approach prevents overwhelming the system with unnecessary details.
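Progressive disclosure can be sketched as a registry that always exposes short descriptions but fetches a skill's full instructions only when that skill is activated. The `LazySkill` and `SkillRegistry` names, and the two sample skills, are assumptions made for illustration only.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class LazySkill:
    name: str
    description: str               # short summary that is always visible to the agent
    load_body: Callable[[], str]   # full instructions, fetched only on activation


@dataclass
class SkillRegistry:
    skills: Dict[str, LazySkill] = field(default_factory=dict)
    loaded: Dict[str, str] = field(default_factory=dict)

    def register(self, skill: LazySkill) -> None:
        self.skills[skill.name] = skill

    def index(self) -> List[str]:
        """Cheap listing for the prompt: names and descriptions only."""
        return [f"{s.name}: {s.description}" for s in self.skills.values()]

    def activate(self, name: str) -> str:
        """Load the full body the first time a skill is needed, then cache it."""
        if name not in self.loaded:
            self.loaded[name] = self.skills[name].load_body()
        return self.loaded[name]


registry = SkillRegistry()
registry.register(LazySkill("scrape", "Extract tables from a web page",
                            lambda: "FULL SCRAPE INSTRUCTIONS"))
registry.register(LazySkill("analyze", "Summarize a CSV file",
                            lambda: "FULL ANALYSIS INSTRUCTIONS"))

print(registry.index())             # descriptions only, no bodies
print(registry.activate("scrape"))  # the scrape body is loaded at this point
print(sorted(registry.loaded))      # the analyze body was never loaded
```

In this sketch the context cost of an unused skill is one line of description, which is the property the text attributes to progressive disclosure.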

Loading skill content only on demand minimizes unnecessary data processing. In January 2026, researchers at the University of Tartu demonstrated that their AgentForge framework achieved sub-100ms orchestration delays while completing 87.3% of web scraping tasks and 91.2% of data analysis tasks.

Efficiency also extends to model performance. Smaller models equipped with curated skills can match or surpass larger models without them. For example, Gemini 3 Flash, using skills, outperformed Gemini 3 Pro without skills while cutting per-task API costs by 44%. Research suggests that using 2–3 focused skills yields the best results. Adding more than three skills can introduce cognitive overload, reducing effectiveness by 2.9 percentage points.

Effective integration of these skills requires robust state management and error handling. In February 2026, Lubu Labs launched langchain-agent-skills, an open-source repository. Their system uses explicit state schemas and reducers (like operator.add or custom logic) to manage data flow across multi-agent workflows. This ensures state persistence across multiple steps, preventing single-skill failures from disrupting the entire process.
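The idea of a state schema with per-key reducers can be illustrated in plain Python. This is a simplified stand-in written for this article, not the langchain-agent-skills or LangGraph API; the `REDUCERS` table, the key names, and `apply_update` are hypothetical.

```python
import operator
from typing import Any, Callable, Dict

Reducer = Callable[[Any, Any], Any]

# Assumed schema: each key names the reducer used to merge updates into it.
REDUCERS: Dict[str, Reducer] = {
    "messages": operator.add,               # lists are concatenated, never overwritten
    "errors": operator.add,                 # failures accumulate across steps
    "retries": lambda old, new: old + new,  # custom logic: running counter
}


def apply_update(state: Dict[str, Any], update: Dict[str, Any]) -> Dict[str, Any]:
    """Merge one skill's partial update into the shared state."""
    merged = dict(state)
    for key, value in update.items():
        if key in REDUCERS and key in merged:
            merged[key] = REDUCERS[key](merged[key], value)
        else:
            merged[key] = value             # keys without a reducer are overwritten
    return merged


state = {"messages": ["start"], "errors": [], "retries": 0, "status": "running"}
state = apply_update(state, {"messages": ["scraped page"], "status": "ok"})
state = apply_update(state, {"errors": ["analysis timeout"], "retries": 1})
print(state)
```

Because the failing step only appends to `errors`, the messages written by the earlier step survive, which is the persistence property described above.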

Security is another critical challenge. In February 2026, the ClawHavoc campaign exposed vulnerabilities when nearly 1,200 malicious skills were distributed via an agent marketplace to steal API keys and browser credentials. This incident highlights the need for trust-tiered execution environments and rigorous validation of skill sources before deployment.

A skills graph serves as a framework that defines how AI agents collaborate. Instead of relying on hard-coded decisions, execution paths are outlined in formats like JSON or YAML. This setup forms the orchestration layer, connecting skill execution with flexible workflow management. The graph engine oversees sequencing, branching, and coordination, allowing agents to focus solely on their designated tasks.

Skills graphs rely on applicability gates to decide when a particular skill should activate. Each skill includes a condition (C) to evaluate its relevance to the current goal or observation. Once triggered, the skill enters "Skill Mode", taking exclusive control of the interaction and maintaining its internal state until a termination condition is met. If an agent generates low-confidence data, the graph redirects the task to a human, a retry mechanism, or a fallback agent.
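The redirect on low-confidence output can be sketched as a small routing function. The threshold of 0.8, the retry limit, the `fallback_available` flag, and the route names are illustrative assumptions; the text only states that the graph chooses among a human, a retry, or a fallback agent.

```python
from typing import Any, Dict


def route(result: Dict[str, Any], attempts: int,
          threshold: float = 0.8, max_retries: int = 2) -> str:
    """Pick the next node for a skill result (hypothetical routing policy)."""
    if result["confidence"] >= threshold:
        return "continue"            # confident enough: follow the normal edge
    if attempts < max_retries:
        return "retry"               # low confidence: try the same skill again
    if result.get("fallback_available", False):
        return "fallback_agent"      # retries exhausted: hand off to another agent
    return "human_review"            # nothing else left: escalate to a person


print(route({"confidence": 0.93}, attempts=0))
print(route({"confidence": 0.41}, attempts=0))
print(route({"confidence": 0.41, "fallback_available": True}, attempts=2))
print(route({"confidence": 0.41}, attempts=2))
```

Keeping this decision in the graph layer, rather than inside each skill, is what lets the routing policy change without touching skill code.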

This setup reflects the idea of progressive disclosure, keeping the context streamlined and relevant. Real-world systems build on this adaptability through declarative orchestration, as recent industry examples show.

In February 2026, Alexander Shereshevsky introduced a declarative agent orchestration engine for social analytics using LlamaIndex. By replacing a hard-coded Python pipeline with a JSON-defined graph, his system enabled automatic parallelism and probabilistic outputs ready for simulation - without altering the core code. Shereshevsky explained:

"Instead of writing a pipeline, we wrote a graph definition - a JSON file that declares which agents exist, what they produce, and what they depend on."

Orcaworks, on the other hand, developed "Orca", a digital coworker that uses governed graphs to automate operations and ensure compliance. The system manages classification, evidence extraction, and rule evaluation across multiple platforms, ensuring that every step follows approved logic for audit purposes. This declarative approach simplifies the addition of new capabilities by updating the graph definition rather than rewriting functions.
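A declarative graph of this kind might look like the sketch below: a JSON document lists agents, their outputs, and their dependencies, and a small engine derives which agents can run in parallel. The JSON layout and agent names are invented for illustration and are not the format used by Shereshevsky or Orcaworks. The scheduling uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+).

```python
import json
from graphlib import TopologicalSorter
from typing import List

# Hypothetical graph definition: which agents exist, what they produce,
# and what they depend on.
GRAPH_JSON = """
{
  "agents": {
    "classify": {"produces": "label",    "depends_on": []},
    "extract":  {"produces": "evidence", "depends_on": []},
    "evaluate": {"produces": "verdict",  "depends_on": ["classify", "extract"]},
    "report":   {"produces": "summary",  "depends_on": ["evaluate"]}
  }
}
"""


def parallel_batches(graph_json: str) -> List[List[str]]:
    """Group agents into batches whose members have no unmet dependencies."""
    spec = json.loads(graph_json)["agents"]
    sorter = TopologicalSorter(
        {name: set(node["depends_on"]) for name, node in spec.items()}
    )
    sorter.prepare()                       # raises CycleError on circular dependencies
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready()) # everything runnable right now
        batches.append(ready)
        sorter.done(*ready)                # mark the batch finished to unlock successors
    return batches


batches = parallel_batches(GRAPH_JSON)
print(batches)
```

Adding a new agent means adding one entry to the JSON; the batching function is unchanged, which mirrors the claim that capabilities are added by updating the graph definition.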

Shifting from traditional pipelines to skills graphs involves explicit state management. Advanced systems adopt a "Subject-Object" memory architecture, where the "Subject" tracks long-term goals and constraints, while the "Object" adjusts as the conversation evolves. Frameworks like LangGraph require clear state schemas and reducers (e.g., operator.add) to prevent data loss during multi-agent interactions.

Security remains a major concern. An analysis of 42,447 community-contributed skills revealed that 26.1% contained vulnerabilities, such as prompt injection and data exfiltration. Additionally, skills bundling executable scripts were found to be 2.12 times more likely to have vulnerabilities compared to instruction-only skills. To address these risks, organizations should adopt a four-tier governance model, which includes static analysis, semantic intent classification, behavioral sandboxing, and permission manifest validation.
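The four governance tiers can be sketched as an ordered chain of checks that stops at the first failure. Every check below is a deliberately simplistic placeholder (keyword matches and a hard-coded allowlist); the rule contents, the `ALLOWED_PERMISSIONS` set, and the skill dictionary layout are assumptions, and a real deployment would need far stronger analysis at each tier.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

SkillSpec = Dict[str, Any]
Check = Callable[[SkillSpec], bool]

ALLOWED_PERMISSIONS = {"read_files", "network:api.example.com"}  # hypothetical allowlist


def static_analysis(skill: SkillSpec) -> bool:
    # Toy rule: reject bundled scripts that read environment variables.
    return "os.environ" not in skill.get("script", "")


def intent_classification(skill: SkillSpec) -> bool:
    # Placeholder for a semantic classifier; here only a keyword check.
    return "ignore previous instructions" not in skill.get("instructions", "").lower()


def behavioral_sandbox(skill: SkillSpec) -> bool:
    # Placeholder: a real system would execute the skill in isolation and observe it.
    return not skill.get("observed_exfiltration", False)


def permission_manifest(skill: SkillSpec) -> bool:
    # Every requested permission must appear in the allowlist.
    return set(skill.get("permissions", [])) <= ALLOWED_PERMISSIONS


TIERS: List[Tuple[str, Check]] = [
    ("static_analysis", static_analysis),
    ("intent_classification", intent_classification),
    ("behavioral_sandbox", behavioral_sandbox),
    ("permission_manifest", permission_manifest),
]


def first_failed_tier(skill: SkillSpec) -> Optional[str]:
    """Return the name of the first tier the skill fails, or None if it passes all."""
    for name, check in TIERS:
        if not check(skill):
            return name
    return None


safe = {"instructions": "Summarize the CSV.", "permissions": ["read_files"]}
risky = {"instructions": "Summarize.", "script": "print(os.environ)", "permissions": []}
greedy = {"instructions": "Summarize.", "permissions": ["read_files", "keychain"]}

print(first_failed_tier(safe), first_failed_tier(risky), first_failed_tier(greedy))
```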

Another challenge, called "routing collapse", occurs when a single costly agent is repeatedly invoked, reducing selection accuracy. As skill libraries grow, this issue can worsen, leading to performance drops. Using skill-aware orchestration systems can significantly cut learning costs - by 300x to 700x - when compared to traditional reinforcement learning-based routers.

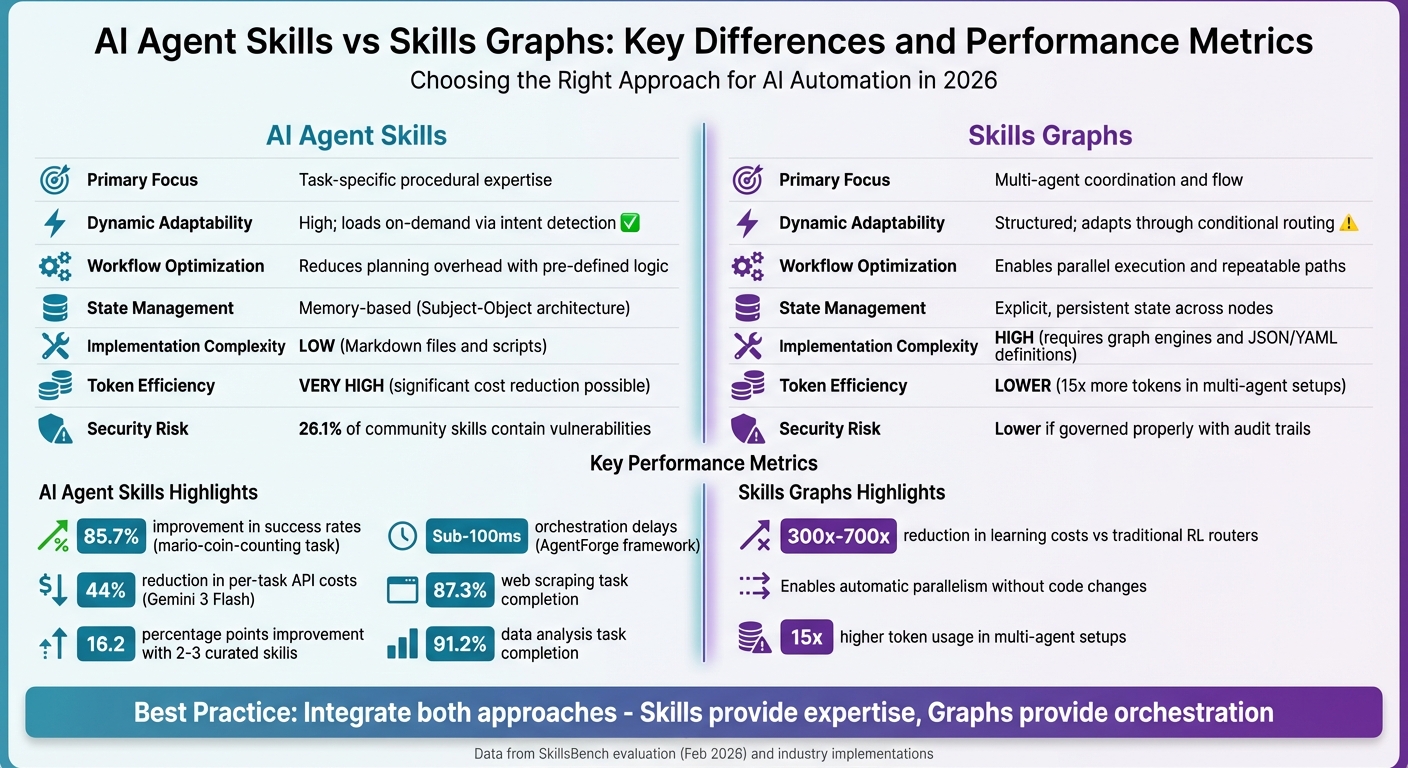

AI Agent Skills vs Skills Graphs: Key Differences and Performance Metrics

Both AI agent skills and skills graphs offer distinct strengths and challenges when it comes to automation. By examining their key features, you can better understand which approach aligns with your specific needs. Here's a closer look at how each method tackles automation challenges.

AI agent skills shine when it comes to modular, task-specific expertise that activates only when necessary. This approach, often called "progressive disclosure", can drastically reduce system prompt token costs. Instead of overloading the context window with every instruction upfront, only the relevant skills are engaged. Studies suggest that using 2–3 well-curated skills improves task performance. However, security is a notable concern, as community-contributed skills may introduce risks like prompt injection or data leaks; using secure AI sandboxes can help mitigate these infrastructure vulnerabilities.

On the other hand, skills graphs excel in managing complex, multi-agent workflows where control and transparency are critical. These graphs allow for parallel processes, conditional logic, and fallback mechanisms, ensuring systems remain resilient in unpredictable scenarios. As Orcaworks aptly states:

"Intelligence alone is not enough. What matters is how that intelligence is structured, governed, and executed over time."

The downside? Skills graphs come with higher implementation complexity. They require tools like graph databases (e.g., Neo4j), orchestration engines, and clearly defined state schemas. Additionally, multi-agent graph systems can use up to 15 times more tokens compared to simpler single-agent approaches.

| Criterion | AI Agent Skills | Skills Graphs |
|---|---|---|
| Primary Focus | Task-specific procedural expertise | Multi-agent coordination and flow |
| Dynamic Adaptability | High; loads on-demand via intent detection | Structured; adapts through conditional routing |
| Workflow Optimization | Reduces planning overhead with pre-defined logic | Enables parallel execution and repeatable paths |
| State Management | Memory-based (Subject-Object architecture) | Explicit, persistent state across nodes |
| Implementation Complexity | Low; Markdown files and scripts | High; requires graph engines and JSON/YAML definitions |
| Token Efficiency | Very high; significant cost reduction possible | Lower; 15x more tokens in multi-agent setups |
| Security Risk | Vulnerabilities present in community skills | Lower if governed properly with audit trails |

Ultimately, each method brings its own strengths, and the choice between them depends on the scale and control requirements of your system.

The most effective AI systems in 2026 don’t force a choice between agent skills and skills graphs - they integrate both seamlessly. Skills bring task-specific expertise, while graphs provide the framework to decide when and how to apply them. Abhilash Samantapudi from Glean explains it well:

"Skills are units of expertise, and agents are end-to-end workflows that decide when and how to apply those skills."

This combination addresses a major hurdle: agent development is dominated by orchestration and tool design. Currently, 95% of the effort goes into these areas rather than into straightforward API calls. By encapsulating domain knowledge into reusable skills and coordinating them through structured graphs, developers can create systems that are not only smarter but also easier to manage.

To put this into action, start by breaking workflows into smaller, manageable subtasks and organizing them as curated skills - this approach has been shown to improve task pass rates by 16.2 percentage points. Then, use graph-based architectures to handle dependencies, error management, and state tracking across steps. Adding a critic node, where a supervising agent checks outputs against the overall system state, can further boost reliability.
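A critic node can be sketched as a function that compares a step's output with the shared system state and reports any mismatches. The state keys (`task_id`, `required_fields`) and the specific checks are hypothetical; a supervising agent in a real system would typically apply richer, model-based review.

```python
from typing import Any, Dict, List


def critic(state: Dict[str, Any], output: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the output is accepted."""
    problems = []
    # The output must belong to the task the graph is currently working on.
    if output.get("task_id") != state["task_id"]:
        problems.append("task_id mismatch")
    # Every field that downstream nodes depend on must be present.
    for key in state["required_fields"]:
        if key not in output:
            problems.append(f"missing field: {key}")
    return problems


state = {"task_id": "t-42", "required_fields": ["summary", "sources"]}

good = {"task_id": "t-42", "summary": "Sales rose.", "sources": ["q3.csv"]}
bad = {"task_id": "t-41", "summary": "Sales rose."}

print(critic(state, good))
print(critic(state, bad))
```

The graph can then route on the result: an empty list continues the workflow, while a non-empty list triggers a retry or escalation.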

The growing adoption of these methods highlights their potential. By early 2026, the open-standard Agent Skills repository had earned over 20,000 GitHub stars, reflecting the widespread interest in this approach. As ImagineX, an AI developer and author, aptly puts it:

"The essence of programming is shifting from 'writing' to 'orchestrating'".

With this shift, your role evolves from writing every individual step to designing challenges that agents solve using the right blend of skills and structured execution. This marks a transformative leap in how we think about and build AI systems.